For Amazon sellers and developers building e-commerce tools, accurate marketplace data is the foundation of competitive strategy. Whether you are tracking the Buy Box, analyzing competitor pricing, or managing your own inventory, the method you choose to extract this data dictates your operational speed, your development costs, and ultimately your market advantage. In 2026, Amazon seller data access comes down to a fundamental choice: integrating with Amazon's official Selling Partner API (SP-API) or using a dedicated scraping API.

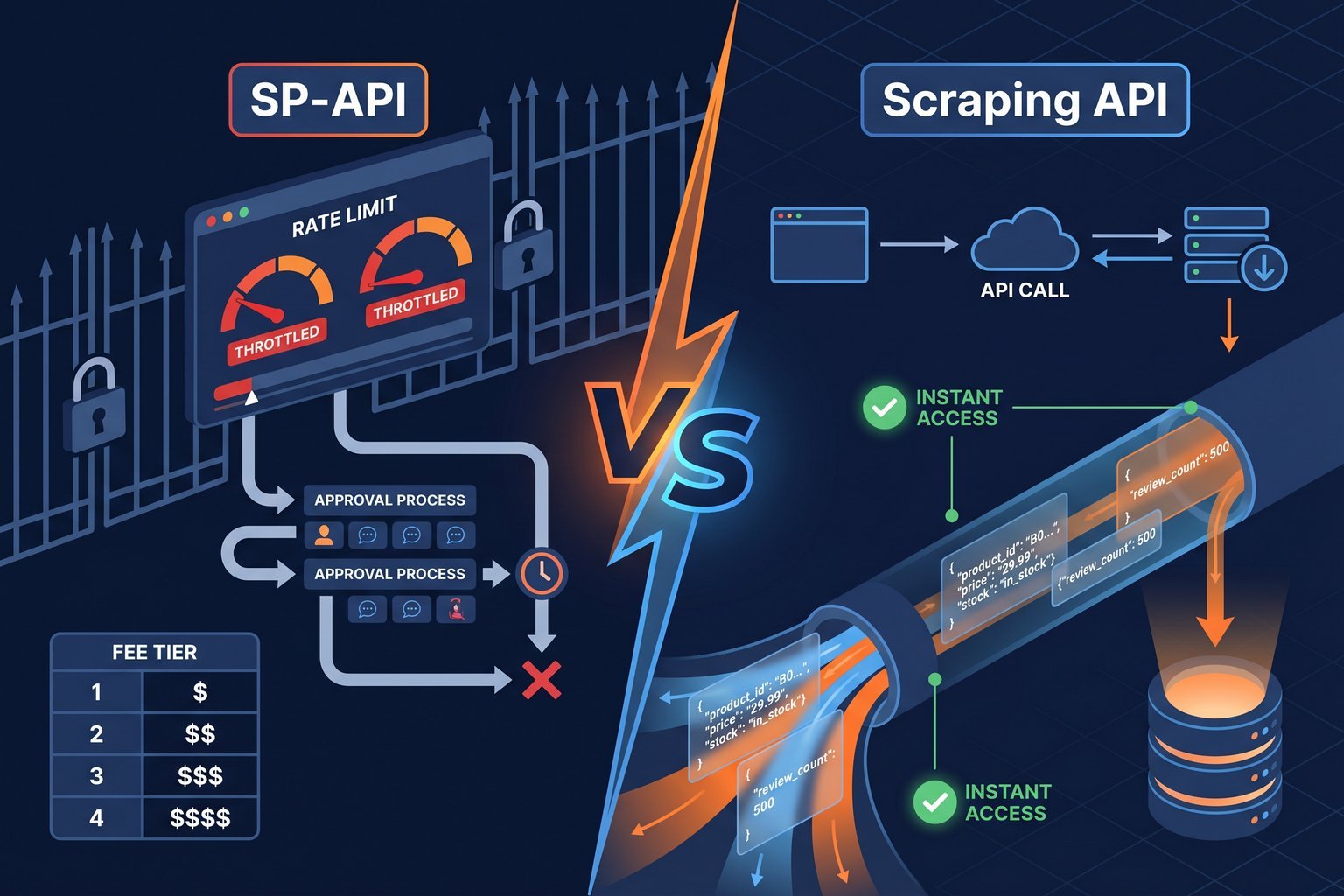

Both approaches promise to deliver structured Amazon data, but their architectures, intended use cases, and limitations are vastly different. The SP-API is designed primarily for sellers to manage their own accounts, while scraping APIs are built for broad market intelligence and competitive analysis. This guide compares the Amazon SP-API with scraping APIs, examining rate limits, the approval process, the proposed fee structure, and why many developers are turning to dedicated scraping solutions like Easyparser for their data pipelines.

Understanding the Amazon Selling Partner API (SP-API)

The Amazon Selling Partner API (SP-API) is the official, modernized replacement for the legacy Amazon Marketplace Web Service (MWS). It provides sellers and registered developers with programmatic access to a seller's own data, including orders, shipments, payments, and inventory. When a seller authorizes an application, the SP-API allows that application to perform actions on the seller's behalf, such as updating prices or acknowledging order fulfillment.

For operations that strictly involve managing a seller's internal account mechanics, the SP-API is the correct and necessary tool. It offers authenticated, structured endpoints for tasks that require write access to the Seller Central ecosystem. However, when the requirement shifts from internal management to external market intelligence, such as monitoring competitor prices across thousands of ASINs or analyzing category-wide sales trends, the limitations of the SP-API become immediately apparent.

The Critical Limitations of SP-API for Market Intelligence

While the SP-API is robust for internal seller operations, developers attempting to use it as a general-purpose data extraction tool face significant structural barriers. These limitations are not bugs; they are intentional design choices by Amazon to protect their infrastructure and control data flow.

1. Restrictive Rate Limits and Throttling

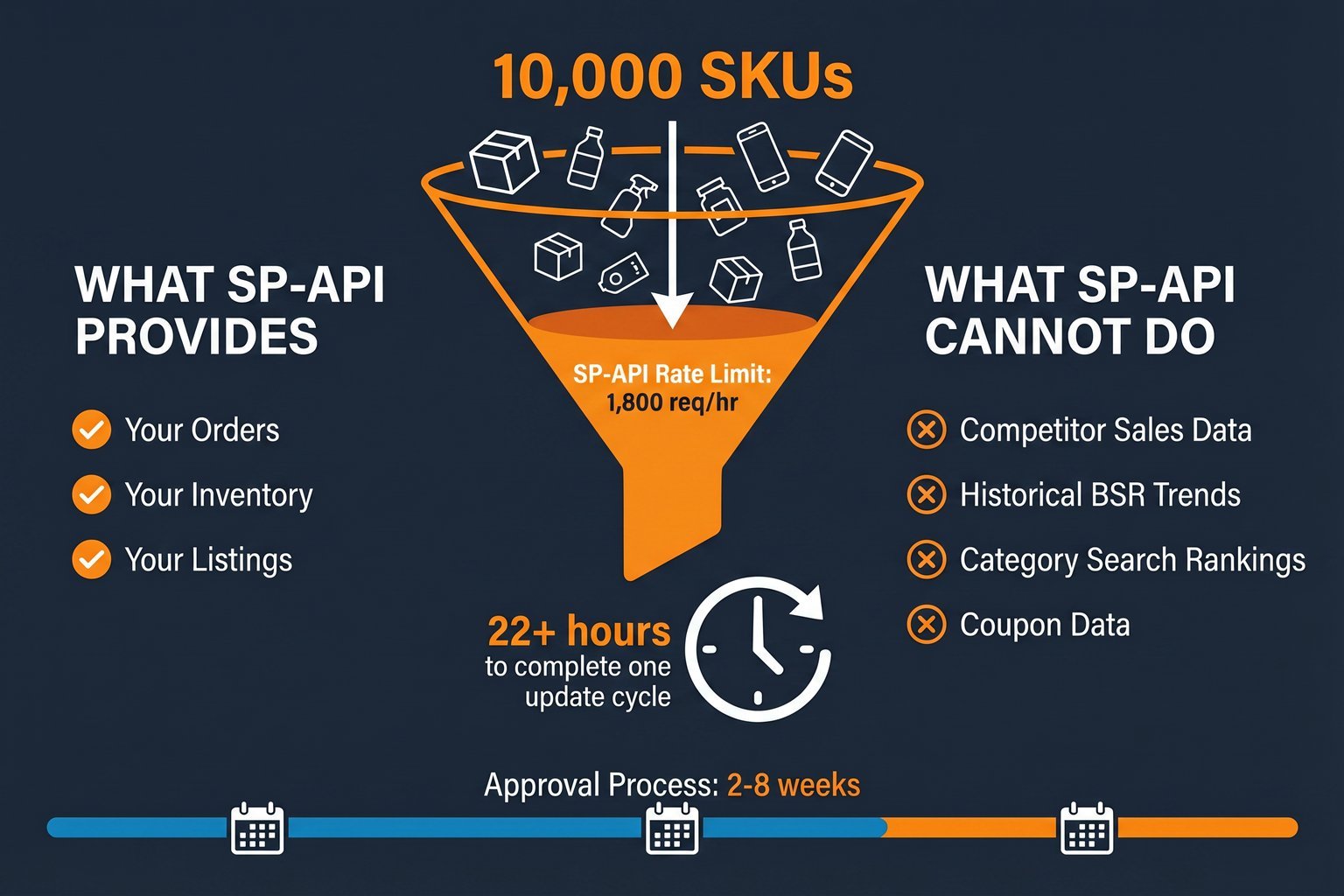

The most immediate hurdle developers encounter with the SP-API is its aggressive rate limiting. Amazon enforces strict quotas on how many requests an application can make per second. For example, the getCompetitivePricing endpoint, which is crucial for monitoring the Buy Box, is typically limited to 0.5 requests per second with a burst capacity of just 1 request. This translates to a maximum of 1,800 requests per hour.
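SP-API throttling follows a token-bucket model: tokens refill at the endpoint's rate, and the burst value caps how many can accumulate. The sketch below shows how a client might pace itself against the 0.5 requests per second and burst of 1 quoted above. The class name `TokenBucket` and its injectable clock are illustrative assumptions, not part of any Amazon SDK.

```python
import time


class TokenBucket:
    """Client-side pacing helper modelled on token-bucket throttling.

    Illustrative only: the rate and burst defaults are the figures quoted
    in this article, not values read from Amazon at runtime.
    """

    def __init__(self, rate=0.5, burst=1, clock=time.monotonic):
        self.rate = rate            # tokens added per second
        self.burst = burst          # maximum tokens the bucket can hold
        self.clock = clock          # injectable so the logic can be tested
        self.tokens = float(burst)  # start with a full bucket
        self.updated = clock()

    def _refill(self):
        # Add tokens for the time elapsed since the last check, capped at burst.
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.updated = now

    def try_acquire(self):
        """Return True and consume a token if a request may be sent now."""
        self._refill()
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

    def seconds_until_ready(self):
        """Return how long to wait before the next request is allowed."""
        self._refill()
        if self.tokens >= 1:
            return 0.0
        return (1 - self.tokens) / self.rate
```

With these defaults, a second request sent immediately after the first is refused, and the helper reports a two-second wait before the next one can go out.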

If you are a seller attempting to monitor 10,000 competitor SKUs, a single API call per SKU is rarely sufficient. You often need to combine pricing data with inventory status and Buy Box ownership, requiring multiple calls per product. At four calls per product, monitoring those 10,000 SKUs requires 40,000 API requests. At 1,800 requests per hour, one update cycle takes over 22 hours to complete, which makes real-time pricing intelligence impossible. This severe throttling is a primary reason why developers look beyond the SP-API for competitive tracking.
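The arithmetic behind that estimate is easy to reproduce. The helper below is a small sketch; the function name is arbitrary, and the four calls per SKU is the assumption implied by the 40,000-request figure.

```python
def refresh_cycle_hours(sku_count, calls_per_sku, requests_per_second):
    """Return the hours needed to refresh every SKU once at a sustained rate."""
    total_requests = sku_count * calls_per_sku
    seconds = total_requests / requests_per_second
    return seconds / 3600


# Figures from the example above: 10,000 SKUs, an assumed 4 calls per SKU
# (40,000 requests in total), and a limit of 0.5 requests per second.
hours = refresh_cycle_hours(sku_count=10_000, calls_per_sku=4, requests_per_second=0.5)
print(f"Full refresh takes about {hours:.1f} hours")
```

Changing `calls_per_sku` or `sku_count` shows how quickly the cycle time grows once a catalog reaches the tens of thousands.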

2. The Grueling Approval Process

Gaining access to the SP-API is not a simple matter of generating an API key. Developers must navigate a rigorous, multi-step approval process that can take weeks or even months. The process involves creating a Developer Profile, detailing the application's purpose, and undergoing security assessments. If the application requires access to Personally Identifiable Information (PII), such as customer shipping addresses, the developer must pass extensive Data Protection Policy (DPP) audits, including Vulnerability Assessments and Penetration Testing (VAPT).

This high barrier to entry significantly delays time-to-market for new tools and internal dashboards. For teams that need to deploy a pricing monitor or a sales analysis tool quickly, the bureaucratic friction of the SP-API approval process is a major deterrent.

3. The Proposed 2026 Fee Structure

Historically, the SP-API has been free to use. However, Amazon announced a complex, tiered fee structure intended for implementation in 2026. Although the rollout was recently delayed indefinitely after developer pushback, the proposed structure reveals Amazon's direction. The plan introduces an annual fee of $1,400 for all third-party developers, coupled with monthly usage tiers.

The proposed tiers are expensive: the Pro tier at $1,000 per month for 25 million GET calls, the Advanced tier at $5,000 per month for 125 million GET calls, and overage charges of $0.40 per 1,000 calls. For applications that rely on high-volume polling to track market changes, these fees transform the SP-API from a free utility into a significant operational expense, forcing developers to reconsider their data acquisition strategies.
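To see what these numbers could mean for a polling-heavy application, the sketch below estimates cost from the figures quoted above. It assumes overage is billed per 1,000 GET calls beyond the tier allowance and that the annual fee is charged on top of the monthly tier. Both are readings of the proposal rather than confirmed billing rules, and the function and constant names are illustrative.

```python
ANNUAL_FEE = 1_400        # USD per year, as proposed
OVERAGE_PER_1000 = 0.40   # USD per 1,000 GET calls beyond the tier allowance

# Only the two paid tiers quoted above; any other tiers are omitted.
TIERS = {
    "pro": {"monthly_fee": 1_000, "included_calls": 25_000_000},
    "advanced": {"monthly_fee": 5_000, "included_calls": 125_000_000},
}


def monthly_cost(tier, get_calls):
    """Estimate one month's cost for a tier and a GET call volume."""
    plan = TIERS[tier]
    extra_calls = max(0, get_calls - plan["included_calls"])
    return plan["monthly_fee"] + extra_calls / 1_000 * OVERAGE_PER_1000


def yearly_cost(tier, get_calls_per_month):
    """Estimate a year's cost, assuming the same call volume every month."""
    return ANNUAL_FEE + 12 * monthly_cost(tier, get_calls_per_month)


# Example: 30 million GET calls per month on the Pro tier.
print(f"Monthly: ${monthly_cost('pro', 30_000_000):,.0f}")
print(f"Yearly:  ${yearly_cost('pro', 30_000_000):,.0f}")
```

Under these assumptions, exceeding the Pro allowance by 5 million calls triples the monthly bill, which is why high-frequency polling is the usage pattern most exposed to the proposal.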

4. Incomplete Competitive Data

Perhaps the most fundamental limitation of the SP-API for market research is its scope. The API is designed to provide data about a seller's own listings and the immediate competition on those specific ASINs. It does not provide access to the broader Amazon catalog. You cannot use the SP-API to scrape Amazon search results, analyze category-wide Best Sellers Rank (BSR) trends, extract detailed customer reviews, or retrieve "bought in past month" statistics for products you do not sell.

Furthermore, critical competitive data points, such as active coupon strings or historical sales volume estimates for competing products, are entirely absent from the SP-API endpoints. If your business strategy relies on comprehensive market visibility, the SP-API simply does not provide the necessary data.

The Scraping API Alternative: Broad Access and High Throughput

To overcome the limitations of the SP-API, developers utilize dedicated scraping APIs. These services act as an intermediary layer, programmatically extracting public data directly from Amazon's storefront pages and returning it in structured JSON format. Unlike the SP-API, which connects to the backend seller database, scraping APIs interact with the frontend, capturing exactly what a consumer sees.

This approach offers several distinct advantages for competitive intelligence and market research.

Immediate Access Without Bureaucracy

Scraping APIs eliminate the grueling approval process associated with the SP-API. Developers can typically sign up, generate an API key, and begin extracting data within minutes. There are no Developer Profiles to submit, no security audits to pass, and no weeks spent waiting for Amazon's approval. This immediate access accelerates development cycles and allows teams to deploy data-driven tools rapidly.

Unrestricted Catalog Visibility

Because scraping APIs extract data from the public storefront, they are not limited to a specific seller's inventory. Developers can retrieve data for any ASIN, search term, or category on Amazon. This enables comprehensive market research, allowing sellers to analyze competitor pricing strategies, track keyword rankings, and monitor category trends without restriction.

High-Volume Throughput

Professional scraping APIs are built to handle massive scale. They manage the complex infrastructure required to extract data reliably, including proxy rotation, CAPTCHA solving, and request retries. This allows developers to run high-volume extraction tasks, such as monitoring tens of thousands of ASINs hourly, without hitting the restrictive rate limits that cripple SP-API applications.
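Request retries are one piece of that infrastructure that is easy to picture in code. The sketch below shows a generic retry loop with exponential backoff and jitter. It is not taken from Easyparser or any other vendor, and `fetch_with_retries` and its parameters are hypothetical names chosen for illustration.

```python
import random
import time


def fetch_with_retries(fetch, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fetch() until it succeeds or the attempts run out.

    fetch is any zero-argument callable that raises on failure.
    Delays grow exponentially (base_delay, 2x, 4x, ...) plus random jitter,
    so that many clients retrying at once do not stay in lockstep.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))
```

A managed scraping service takes on this kind of logic, along with proxy and CAPTCHA handling, so that client code can stay a single request.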

Navigating the 2026 Compliance Landscape

It is important to note that the regulatory environment for automated tools is evolving. In March 2026, Amazon updated its Business Solutions Agreement (BSA) to include strict new policies regarding AI agents and automated tools. These rules explicitly prohibit the use of unauthorized bots that mimic human browsing to manipulate the platform or execute actions without human oversight.

However, extracting publicly available data for market research remains a distinct practice from using bots to automate actions within a seller account. While Amazon actively employs technical measures to block generic web scrapers, utilizing a specialized, compliant data extraction service ensures that your market intelligence gathering operates reliably without violating the rules governing automated account management.

Why Easyparser is the Superior Solution for Amazon Data

When evaluating the Amazon SP-API against scraping solutions, Easyparser stands out as a purpose-built API designed exclusively for Amazon data extraction. It bridges the gap between the restrictive SP-API and the unreliability of generic web scrapers, providing a robust, scalable infrastructure for developers and sellers.

Easyparser offers dedicated operations that map directly to the needs of Amazon businesses. Instead of writing complex parsing logic, developers can call specific endpoints for exactly the data they need. The DETAIL operation returns comprehensive product page data, the OFFER operation lists all sellers along with Buy Box details, and the SEARCH operation provides keyword ranking data. Crucially, Easyparser provides data points unavailable through the SP-API, such as the SALES_ANALYSIS operation for historical sales data, the BEST_SELLERS_RANK operation for rank tracking, and the PACKAGE_DIMENSION operation for precise FBA fee calculations.

Furthermore, Easyparser's pricing model is transparent and predictable. Unlike the complex, tiered structure proposed for the SP-API, Easyparser operates on a simple principle: 1 credit equals 1 successful product result. There are no hidden multipliers or unpredictable compute costs. This allows teams to budget their data acquisition accurately, whether they are tracking a hundred ASINs or a million.
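Because billing is tied to successful results, a credit budget reduces to simple multiplication. The helper below is a rough sketch. The function name, the 30-day month, and the `success_rate` parameter are assumptions made for illustration, and actual consumption depends on which operations you call.

```python
def monthly_credits(asin_count, refreshes_per_day, days=30, success_rate=1.0):
    """Estimate credits consumed, assuming 1 credit per successful result."""
    requests = asin_count * refreshes_per_day * days
    return round(requests * success_rate)


# Tracking 100 ASINs hourly versus 1,000,000 ASINs once a day.
print(monthly_credits(asin_count=100, refreshes_per_day=24))
print(monthly_credits(asin_count=1_000_000, refreshes_per_day=1))
```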

Integrating Easyparser into a Python application is straightforward, as demonstrated by this real-time product detail request:

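    import requests

    API_KEY = "YOUR_API_KEY"  # Get your key from app.easyparser.com
    ASIN = "B098FKXT8L"

    # Query parameters for a real-time product detail (DETAIL) request
    params = {
        "api_key": API_KEY,
        "platform": "AMZ",
        "operation": "DETAIL",
        "asin": ASIN,
        "domain": ".com",
    }

    response = requests.get(
        "https://realtime.easyparser.com/v1/request",
        params=params,
        timeout=30,  # avoid hanging indefinitely on a slow response
    )
    response.raise_for_status()  # stop early on HTTP errors such as 401 or 500
    data = response.json()

    # Missing fields print as None instead of raising KeyError
    product = data.get("product", {})
    print(f"Title: {product.get('title')}")
    print(f"Price: ${product.get('price')}")
    print(f"Rating: {product.get('rating')} stars")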

For applications requiring high throughput, Easyparser's Bulk API allows developers to submit up to 5,000 URLs in a single request, utilizing webhooks to deliver the data asynchronously. This architecture is essential for building scalable competitive intelligence platforms that the SP-API simply cannot support.
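On the client side, the 5,000-URL limit means larger workloads need to be split before submission. The sketch below covers only that splitting step. The Bulk API's endpoint, payload format, and webhook configuration are not shown in this article, so they are deliberately left out, and `batch_urls` and the placeholder ASINs are hypothetical.

```python
def batch_urls(urls, batch_size=5_000):
    """Yield consecutive slices of urls, each at most batch_size long."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(urls), batch_size):
        yield urls[start:start + batch_size]


# Hypothetical input: 12,000 product URLs built from placeholder ASINs.
urls = [f"https://www.amazon.com/dp/ASIN{i:06d}" for i in range(12_000)]
batches = list(batch_urls(urls))
print([len(batch) for batch in batches])
```

Each batch would then be sent as one bulk request, with results arriving later through the configured webhook.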

Easyparser offers 100 free credits to get started, with no credit card required.

Conclusion

The choice between the Amazon SP-API and scraping APIs is not a matter of one being universally better than the other; it is a matter of selecting the right tool for the specific task. If your goal is to build an application that manages a seller's internal operations, such as fulfilling orders or updating account settings, the SP-API is the mandatory, authenticated channel. However, if your objective is to gather comprehensive market intelligence, monitor competitor pricing at scale, or extract data from the broader Amazon catalog, the restrictive rate limits, lengthy approval process, and limited scope of the SP-API make it an inadequate solution.

For these critical data acquisition needs, a dedicated scraping API like Easyparser provides the speed, reliability, and catalog-wide visibility necessary to maintain a competitive edge in the 2026 e-commerce landscape. By leveraging a specialized data extraction infrastructure, developers can bypass bureaucratic delays and focus on building the analytical tools that drive revenue.
